Tokenmaxxing, Promomaxxing, and Misaligned Incentives in Tech

When a measure becomes a target, it ceases to be a good measure.

Engineer’s Codex is a publication about real-world software engineering.

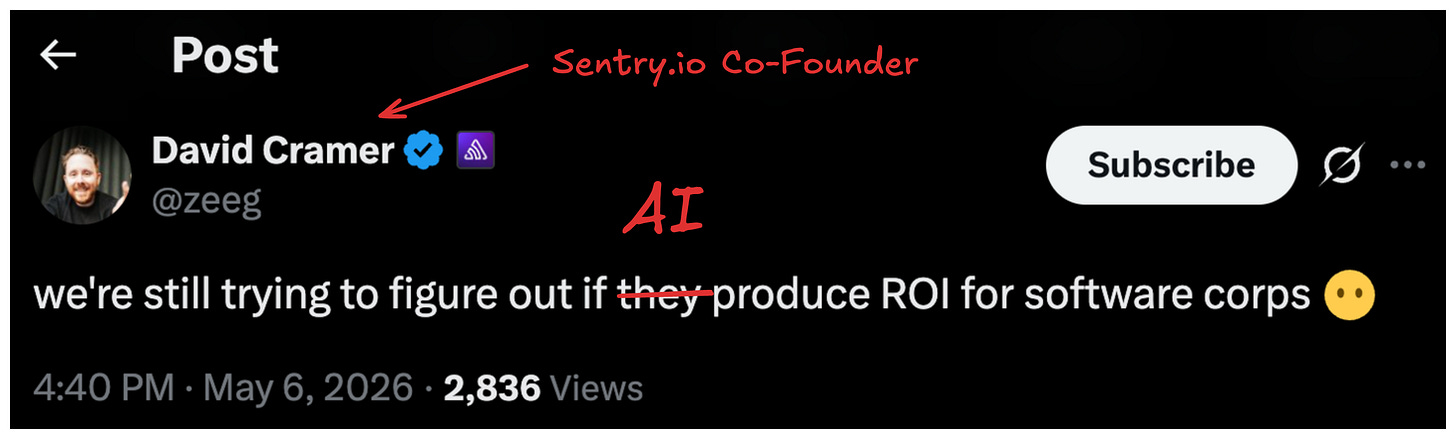

Coinbase did layoffs recently. They’re cutting a large percentage of their workforce, and along with the announcement, they said they want their employees to use AI more. Basically, they want people to tokenmaxx.

If you don’t know what tokenmaxxing is, it’s the idea of maximizing the number of tokens you use when working with AI. Basically, use AI a lot! It’s generally seen as a good thing: in theory, the more tokens you use, the more productive you are.

This isn’t always true.

When a measure becomes a target, it ceases to be a good measure

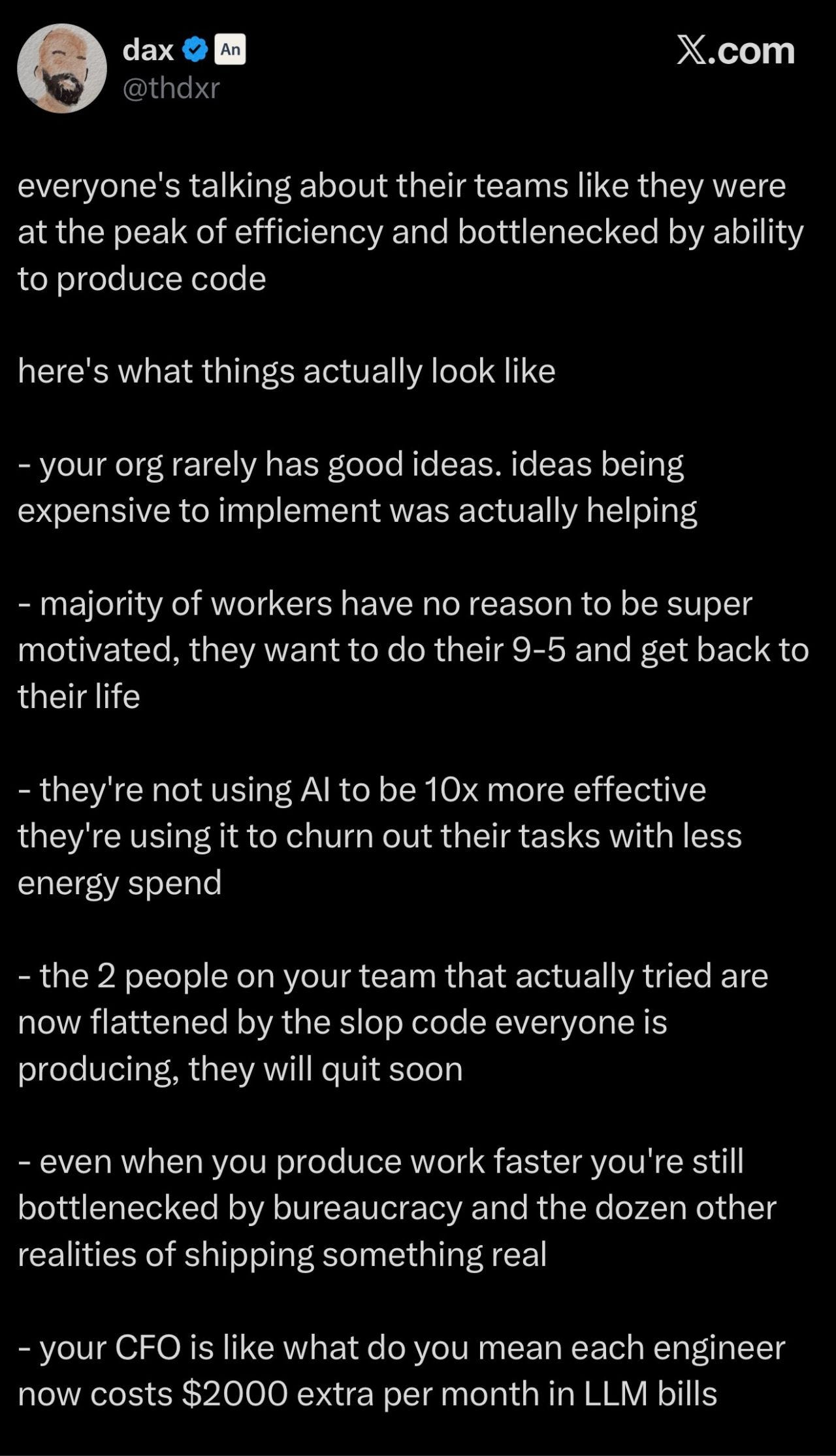

Meta actually created an internal leaderboard that counted the number of tokens each person was consuming. You would think the people consuming the most tokens would generally be the most productive.

But here’s the problem: anything that is measured can and will be gamed (Goodhart’s Law: “When a measure becomes a target, it ceases to be a good measure.”), especially by smart, pragmatic people like Meta engineers. Smart engineers optimize for the best personal outcome, which usually means a promotion, more money, and more scope. If tokenmaxxing is the path there, they will tokenmaxx: it’s visible, and a higher number looks better.

So people started setting up scripts to burn millions of tokens for literally zero productivity. Just burning tokens to do nothing. Meta eventually shut the leaderboard down because it created the wrong incentive.

Promomaxxing

Tokenmaxxing reminds me of a famous complaint about Google: how hard it is to get promoted there, and how that led to a lot of misaligned incentives. You could call it promomaxxing.

Googlers would make things more complex than needed, write more docs than needed, and make those docs much longer than needed, all to manufacture the appearance of hard, complex work. At Google, if your projects weren’t technically complex enough, or you didn’t have enough of them, you weren’t getting promoted.

In theory, this makes sense. People who get promoted should be doing harder and harder things.

Good software engineering, however, should lean toward simplicity, while promotion rewards complexity. And as frameworks and developer infrastructure keep making engineering simpler (which is genuinely good), engineers run out of real complexity to justify their promotions. The result is decisions that are irrational for the business but completely rational for the individual. That’s a textbook misaligned incentive.

The Cobra Effect of Perverse Incentives

The most famous historical example of perverse incentives I can think of is the Cobra Effect in India.

During British rule in India, the government was concerned about the number of venomous cobras. They offered a bounty for every dead cobra brought to them.

Enterprising citizens began breeding cobras specifically to kill them and collect the reward.

When the government realized this and scrapped the bounty, the breeders released their now-worthless snakes, leaving the cobra population higher than when the program started.

The Input → Output → Outcome Discrepancy

Tokenmaxxing has the same problem. Good intentions, wrong incentive structure.

Tokenmaxxing is built on the idea of:

input → output → outcome.

More input should produce more output, which should drive better outcomes. This framing comes from an article by Arnav Gupta on Twitter, and I think he expressed it well.

The issue is that input never converts cleanly into output. Put in 100% input and you might get anywhere from 50% to 150% output, because some of that input is thinking time, debugging, or exploration.

Outcome is even further removed. Output doesn’t necessarily correlate with outcome. In fact, output can even lead to negative outcomes.

Just because you shipped a feature doesn’t mean you moved a metric positively. If your goal is to increase retention and you build a notifications feature, the outcome you want is higher retention. But more notifications don’t guarantee that. If users already get notifications and you add more, you might annoy them and hurt retention. There is no guarantee that more output produces a positive outcome.

There are even worse examples. Say you, as a Google engineer, spend a ton of input to ship a system, and that system has bugs that take down Google’s ad platform for two hours. Say Google loses $5 million as a result. Your output was a net negative. Was tokenmaxxing worth it in that case? On average, tokenmaxxing also produces lower-quality output. Can we really guarantee better outcomes from more, faster input?

This is where input quality matters. And generally, tokenmaxxing degrades input quality.

Slow coding was a feature, not a bug. It required clearer thinking and higher-quality inputs.

When a CEO or PM had 10 ideas and the team could only build 2, you were forced to debate. You had to fight for your idea, kill the weak ones early, and actually pressure-test what was worth building. The constraint created a filter. Now that code is basically free and fast, that filter is gone. Yes, an MVP is valuable data.

Another note: tokenmaxxing doesn’t just 5x your output. It can also 5x your noise. More features, more bugs, more teams building overlapping things in different ways, more meetings to align on stuff that should have been killed in a Slack thread. The alignment tax went up at the exact same time the cost of coding went down.

These are really recent, really clear examples of misaligned incentives. And misaligned incentives are hard problems because they’re people problems. You’re trying to optimize for multiple things at once, and it’s leadership’s job to lay the dominoes in the right way.

Some startups, like Anthropic, have incentives that are naturally aligned with tokenmaxxing. If an engineer tokenmaxxes using Claude, Anthropic simply gets paid more. They don’t really care about your output or your outcome. They care about their own outcome, which happens to be directly correlated with your input. They sell you the potential outcome; what you actually purchase is guaranteed tokens.

Where Tokenmaxxing Excels

Now, I’m not against using AI. I use AI extensively; I would say probably 95% of the code I produce is AI-generated. So this isn’t an argument against using AI at all. It’s an argument about misaligned incentives, and about understanding where tokenmaxxing as a behavior may have good intentions but the wrong implementation. At the end of the day, we just want better outcomes, and understanding how to tokenmaxx in the right ways is important for getting them.

A great example I heard recently comes from a friend at work. He had to run a bunch of experiments to reduce latency in his system. Previously, he would have had to guess which experiments were most promising and pick the top three. With AI, he was able to add flags and set up experiments to test all seven of his ideas. One of the experiments he probably wouldn’t have tried before ended up being one of the better latency wins.
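His actual setup isn’t described, so what follows is only a minimal sketch of the general pattern, assuming each idea can be expressed as a flag-gated variant that can be timed in isolation. The flag names (`idea_1` and so on) and the toy workloads are hypothetical placeholders, not his real experiments; the point is that every variant gets measured instead of a guessed top three.

```python
import statistics
import time
from typing import Callable, Dict, List, Tuple


def median_latency_ms(fn: Callable[[], object], runs: int = 20) -> float:
    """Call fn `runs` times and return the median wall-clock latency in milliseconds."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        fn()
        samples.append((time.perf_counter() - start) * 1000.0)
    return statistics.median(samples)


def rank_variants(
    variants: Dict[str, Callable[[], object]], runs: int = 20
) -> List[Tuple[str, float]]:
    """Measure every flag-gated variant and return (flag, median_ms) pairs, fastest first."""
    results = [(flag, median_latency_ms(fn, runs)) for flag, fn in variants.items()]
    return sorted(results, key=lambda pair: pair[1])


# Hypothetical flags standing in for a baseline plus seven latency ideas.
# The workloads are toys; in a real system each would toggle a code path.
variants = {
    "baseline": lambda: sum(range(200_000)),
    "idea_1": lambda: sum(range(150_000)),
    "idea_2": lambda: sum(range(120_000)),
    "idea_3": lambda: sum(range(100_000)),
    "idea_4": lambda: sum(range(80_000)),
    "idea_5": lambda: sum(range(60_000)),
    "idea_6": lambda: sum(range(40_000)),
    "idea_7": lambda: sum(range(20_000)),
}

if __name__ == "__main__":
    for flag, ms in rank_variants(variants, runs=5):
        print(f"{flag:10s} {ms:.3f} ms")
```

The median is used rather than the mean so that one slow run (a GC pause, a cold cache) doesn’t dominate a variant’s score; a real experiment would also need far more runs and production-like traffic.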

Tokenmaxxing is great for cases like these: rapid exploration and throwaway work where the goal is knowledge. In this case, the outcome (learning which ideas actually work) is guaranteed.

That outcome still has a cost. Previously the cost was mostly human time; now it takes less human time, with token costs added on top. So the calculus has changed, and it will keep changing over time.
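To make “the calculus has changed” concrete, here is a back-of-the-envelope cost model. It is a sketch under simplifying assumptions: exploration cost is just human hours times an hourly rate plus tokens times a per-token price, and every number in the usage (rates, hours, token counts, prices) is hypothetical, chosen only to show how the terms trade off.

```python
from dataclasses import dataclass


@dataclass
class ExplorationPlan:
    ideas_tested: int
    human_hours_per_idea: float
    tokens_per_idea: int


def exploration_cost(
    plan: ExplorationPlan, hourly_rate: float, price_per_million_tokens: float
) -> float:
    """Total cost = human time + token spend (a deliberately simplified, hypothetical model)."""
    human_cost = plan.ideas_tested * plan.human_hours_per_idea * hourly_rate
    token_cost = (
        plan.ideas_tested * plan.tokens_per_idea / 1_000_000 * price_per_million_tokens
    )
    return human_cost + token_cost


# Hypothetical numbers, for illustration only.
manual = ExplorationPlan(ideas_tested=3, human_hours_per_idea=8.0, tokens_per_idea=0)
ai_assisted = ExplorationPlan(
    ideas_tested=7, human_hours_per_idea=1.0, tokens_per_idea=2_000_000
)

print(exploration_cost(manual, hourly_rate=100.0, price_per_million_tokens=10.0))       # 2400.0
print(exploration_cost(ai_assisted, hourly_rate=100.0, price_per_million_tokens=10.0))  # 840.0
```

If token usage per idea grows large enough, or prices or rates change, the comparison can flip. Note that the model only counts cost: it says nothing about whether the extra output produces a better outcome.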

My Takeaways

All this is to say that incentive alignment is really hard. It’s a constant struggle at all levels of leadership, but I have found that the best leaders and companies I’ve worked at excelled at aligning incentives as much as possible.

For example, promotions across tech are focused on outcomes, and seem to be even more outcome-focused nowadays. This may also be due to the turmoil in tech: outcomes are harder to achieve, which lets companies promote less.

Tech is full of missionaries and mercenaries. Generally, there are more mercenaries than missionaries. Mercenaries will always optimize for the path to promotion and money. If that path is misaligned with the company’s health, the fault lies with the incentive structure, not the employees.

It’s worth framing your work in terms of incentive alignment. I’ve found it a useful exercise for lining up promotions, getting cross-functional collaboration done, and, in general, finding “champions” across orgs for both me and my work.
